Not enough voters detecting ballot errors and potential hacks, study finds

Researchers carried out the first study of voter behavior with electronic ballot marking devices and found that voters missed more than 93% of errors on their printed ballots.


New research published this week by a team at the University of Michigan indicates that voters don’t pay enough attention to the election security safeguards at their disposal. The study, led by Ph.D. student Matt Bernhard and Prof. J. Alex Halderman, found that voters missed over 93% of errors on printed ballots that they filled out using electronic ballot marking devices.

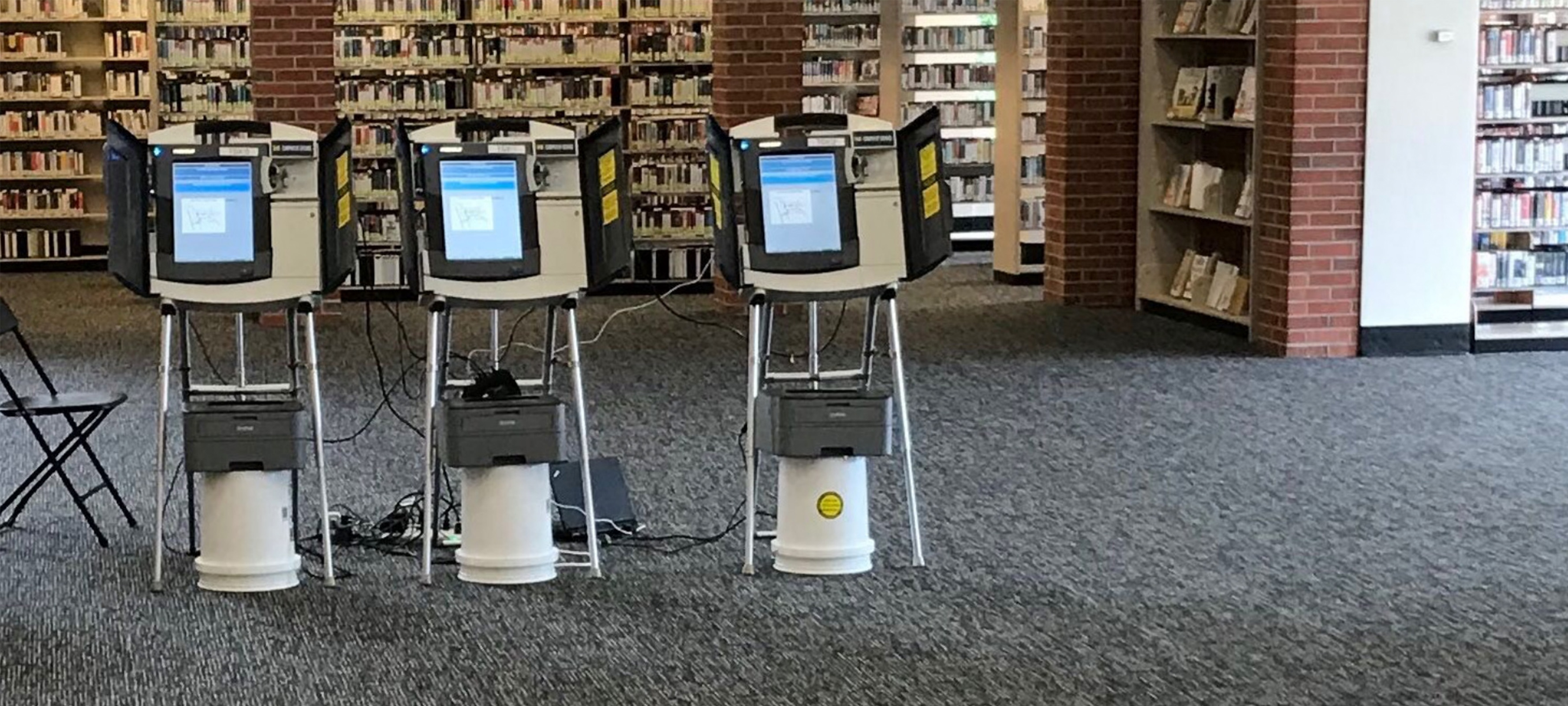

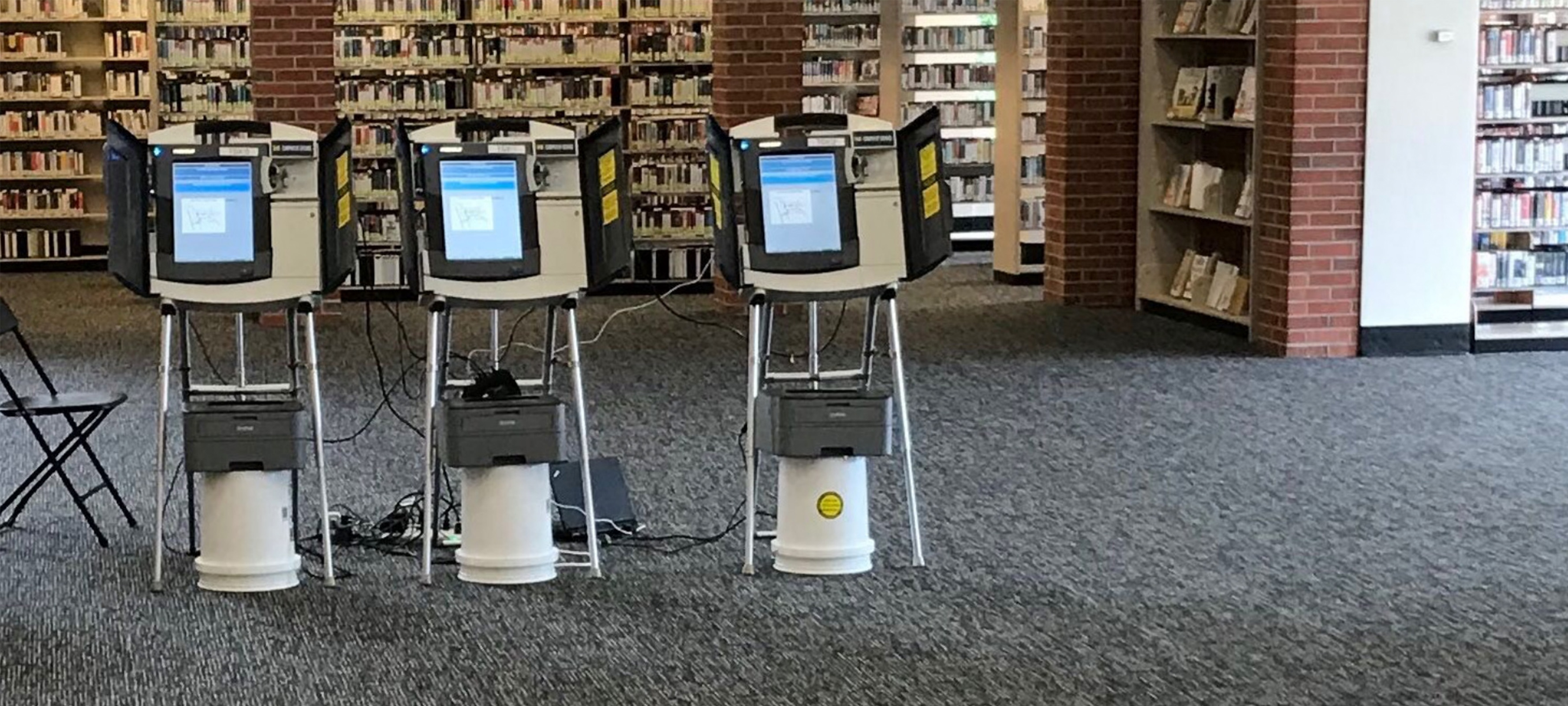

In an attempt to combine the convenience and accessibility of electronic voting machines with the greater security and reliability of paper ballots, ballot marking devices (BMDs) let voters make their selections on a simple touchscreen interface. Once voters finish recording their choices, the machine prints a ballot with those choices marked, which the voter then takes to the ballot box.

It’s here that voters take responsibility for a crucial verification step: checking that their printed ballot matches their actual choices.

“In theory, voters are supposed to check the printout and make sure it’s right,” Halderman says, “but until now nobody’s ever tested whether voters really do spot errors.”


According to Halderman, there is considerable controversy in the election security community about whether these machines are secure. If a machine were hacked, an attacker could make it print ballots marked with choices different from the ones the voter intended.

The Michigan team carried out the first study on this issue at a mock polling place at the Ann Arbor District Library. A total of 241 people participated in the mock election, using BMDs that the team had hacked to change one vote on each printout. The results were alarming.

“Voters missed more than 93% of the errors,” says Halderman. “In jurisdictions where all voters use BMDs, a close election result might be changed without enough voters reporting errors to alert officials that there was a systemic problem.”

Because BMDs offer several advantages, including greater accessibility for voters with disabilities, the team also tested methods to increase verification, with varied results. Verbally instructing voters to review their printouts and giving them a written slate of candidates to vote from both significantly increased review and reporting rates. The team encourages jurisdictions that use BMDs to follow its evidence-based recommendations for getting voters to verify their ballots. But even with these improvements, the rate of undetected errors may be too high to provide strong security in close elections.

One particularly encouraging procedural change was asking voters to vote according to a written slate of candidates, akin to a sample ballot that a voter might bring to the polling place. Voters who followed a slate detected 73% of errors, though the researchers are uncertain how many voters could be persuaded to do so in real elections.

Because of this, the team’s final recommendation is caution.

“Additional research is necessary before it can be concluded that any combination of procedures actually achieves high verification performance in real elections,” they write. “Until BMDs are shown to be effectively verifiable during real-world use, the safest course for security is to prefer hand-marked paper ballots.”

